Hate is No Game: Hate and Harassment in Online Games 2023

The "Hate is No Game: Hate and Harassment in Online Games 2023" report by the ADL Center for Technology and Society highlights the pervasive issue of hate and harassment in online gaming, affecting an estimated 83 million out of 110 million players in the U.S., with three out of four young people experiencing harassment.

The Gaming Safety Coalition Announcement

The Gaming Safety Coalition has been formed by Keywords Studios, Modulate, ActiveFence, and Take This to enhance the safety and integrity of online gaming communities through Trust & Safety best practices, innovative tools, and targeted research.

Reddit rolling out AI bouncer to halt harassment

Reddit is rolling out a new Large Language Model (LLM)-powered harassment filter to help its volunteer moderators combat online harassment. The feature was discovered in a recent APK teardown of the Reddit app for Android.

National Centre for Missing & Exploited Children reveals devastating toll of abuse ignored by tech giants

Big tech companies are criticized for not doing enough to address the surge in online child sexual exploitation. The 32 million reports of such content on major platforms point to a broader problem that extends beyond the reported cases.

Sweet Baby Inc. Doesn’t Do What Some Gamers Think It Does

Sweet Baby Inc., a narrative design company, has become the target of a misinformation campaign by a segment of the gaming community that accuses it of forcibly injecting diversity and altering the storylines of popular games like Alan Wake 2, God of War Ragnarok, and Suicide Squad: Kill the Justice League.

Court Weighs Whose Freedom of Speech Is at Risk in Content Moderation Fight

The U.S. Supreme Court is currently deliberating over content moderation laws in Texas and Florida, with a focus on the potential conflict between protecting users' freedom of speech and safeguarding the platforms' rights to moderate content under the First Amendment.

Business Ethics: Bring Human Values to AI

The article highlights the need for companies to embed ethical guardrails and values into AI development processes, addressing challenges in value definition, programming, compliance, partner alignment, feedback incorporation, and preparation for unexpected behaviors.

AI algorithms pushing content 'romanticising' suicide to children, Oireachtas Committee hears

Social media algorithms are increasingly exposing children to harmful content, including material that romanticizes suicide, a concern highlighted in a recent Oireachtas committee meeting in Ireland.

Are social media apps ‘dangerous products’? 2 scholars explain how the companies rely on young users but fail to protect them

In a Senate Judiciary Committee hearing, Meta CEO Mark Zuckerberg faced criticism for social media's role in harming children, with Sen. Lindsey Graham labeling social media platforms as dangerous products.

Fake explicit Taylor Swift images show victims bear the cost of big tech’s indifference to abuse

AI-generated explicit images of Taylor Swift went viral on X (formerly Twitter), showcasing the misuse of generative AI to create non-consensual sexual imagery and leading to widespread outrage and a delayed response from the platform.

Call of Duty uses AI to detect 2 million toxic voice chats

Activision, the publisher of the Call of Duty franchise, has deployed AI voice moderation software that has detected over two million toxic chats since its introduction in August 2023.

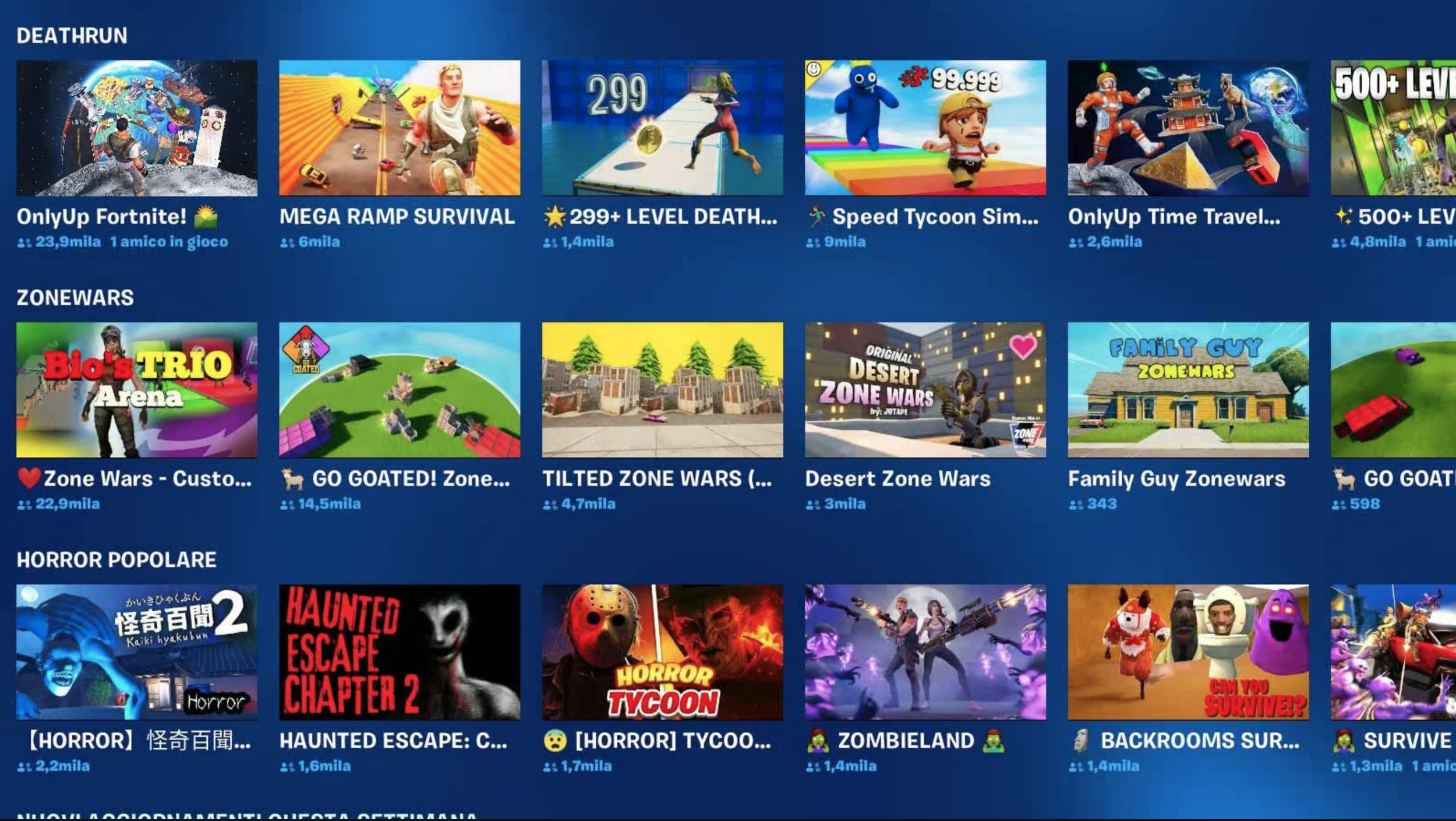

Fortnite’s Creative Islands have a serious content moderation issue

Fortnite's Creative Islands are facing a significant content moderation problem, with a proliferation of low-effort experiences, copyright violations, AI-generated images, and offensive content.